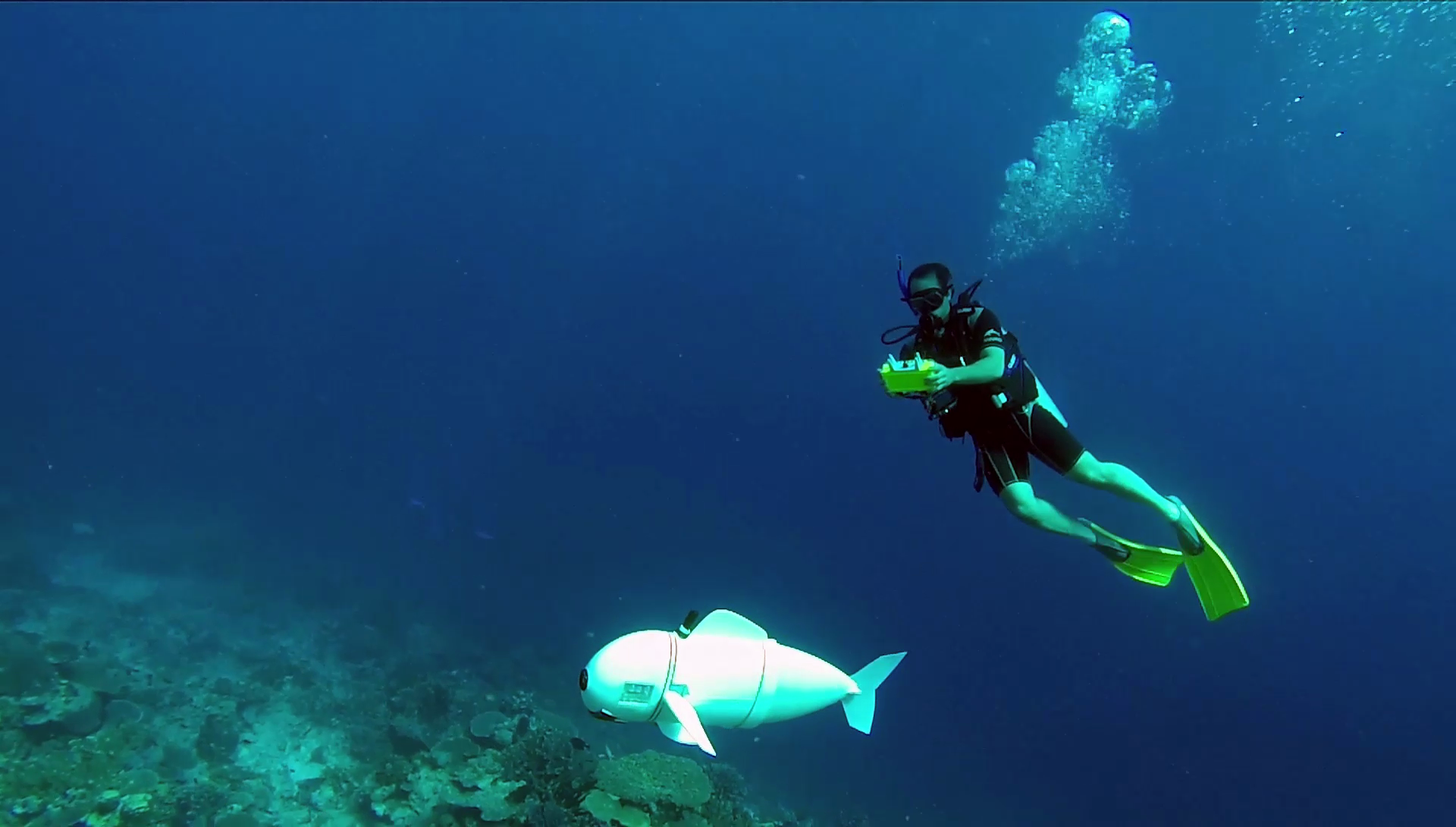

In the depths of the South Pacific, a strange new fish is exploring the Rainbow Reef. Flexing its tail from side to side to propel itself serenely along, it captures the underwater scene using a camera – with a fish-eye lens! – mounted in its head, which also contains a Raspberry Pi 2 among other electronics.

This article first appeared in The MagPi 69 and was written by Phil King.

This is SoFi (pronounced ‘Sophie’), a soft-bodied robot created by researchers at MIT’s Computer Science and Artificial Intelligence Laboratory (CSAIL) to study marine life up close, without disturbing it. That ingenious tail was inspired by the biological system used in tuna fins.

“The fish’s motor pumps water into two balloon-like chambers in the tail,” explains Robert Katzschmann, lead author of the project. “These work sort of like a pair of pistons in an engine: as one chamber expands, it bends and flexes to one side.”

Natural swimmer

After working on SoFi and its predecessors for more than five years, Robert’s team have perfected a naturalistic swimming action. “SoFi can turn, speed up, slow down, and move at different depths, including in strong currents,” he reveals. “On average, SoFi swims at a speed of half a body length per second, though we plan to increase this further by improving its pump system and tweaking the design of its body and tail.”

The robot has two side fins that adjust its pitch for diving and ascending, while its overall buoyancy is controlled by an adjustable weight compartment and a chamber that can change its density by compressing and decompressing air.

“Among some of the challenges we encountered were the strong pressures that our fish had to withstand at deeper depths (down to 18 m) and the boundaries of our acoustic communication range for commanding SoFi remotely,” says Robert. A diver uses a waterproof controller, containing another Raspberry Pi, to send commands to SoFi.

“Methods such as WiFi or Bluetooth don’t work well underwater, so we chose to use sound instead,” explains graduate student Joseph DelPreto. “The remote controller emits ultrasonic acoustic pulses that are too high-pitched for people to hear but that the robot can receive and decode to know how it should behave.”
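To give a feel for how a command might travel as sound, here is a minimal Python sketch of one common approach, binary frequency-shift keying: each bit of a command byte becomes a short burst at one of two ultrasonic tones, and the receiver checks which tone is stronger in each burst. This is not the team's actual protocol. The tone frequencies, sample rate, bit length, and the `encode`, `tone_power`, and `decode` helpers are all assumptions chosen for illustration.

```python
import math

# All values below are illustrative assumptions, not SoFi's real settings.
SAMPLE_RATE = 192_000   # samples per second
FREQ_ZERO = 30_000.0    # Hz, tone used for a 0 bit (above human hearing)
FREQ_ONE = 36_000.0     # Hz, tone used for a 1 bit
BIT_SAMPLES = 960       # samples per bit: 5 ms at 192 kHz


def encode(command):
    """Turn one command byte into audio samples, most significant bit first."""
    samples = []
    for bit_index in range(7, -1, -1):
        bit = (command >> bit_index) & 1
        freq = FREQ_ONE if bit else FREQ_ZERO
        for n in range(BIT_SAMPLES):
            samples.append(math.sin(2 * math.pi * freq * n / SAMPLE_RATE))
    return samples


def tone_power(block, freq):
    """Goertzel algorithm: estimate the signal power at a single frequency."""
    coeff = 2 * math.cos(2 * math.pi * freq / SAMPLE_RATE)
    s_prev = 0.0
    s_prev2 = 0.0
    for x in block:
        s = x + coeff * s_prev - s_prev2
        s_prev2, s_prev = s_prev, s
    return s_prev ** 2 + s_prev2 ** 2 - coeff * s_prev * s_prev2


def decode(samples):
    """Rebuild the command byte by picking the stronger tone in each bit slot."""
    command = 0
    for start in range(0, len(samples), BIT_SAMPLES):
        block = samples[start:start + BIT_SAMPLES]
        bit = 1 if tone_power(block, FREQ_ONE) > tone_power(block, FREQ_ZERO) else 0
        command = (command << 1) | bit
    return command


if __name__ == "__main__":
    sent = 0xA5  # a made-up command code
    received = decode(encode(sent))
    print(f"sent {sent:#04x}, received {received:#04x}")
```

A real underwater link would also have to cope with echoes, noise, and fading signal strength, which this clean round trip ignores.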

The maximum control range is currently 20 m, but it is only reliable up to 10 m: “[It] could be higher but we wanted to minimise the disruption to other fish,” says Robert.

Following fish

SoFi is also able to navigate autonomously to some degree using its on-board camera. “In the future we will show how SoFi can use its vision to follow other fish,” reveals Robert. “By adding pre-recorded maps of the coral reefs onto the Raspberry Pi, we also plan to have the fish self-locate and navigate autonomously through the reefs.”

Robert says the team hope to use SoFi to study deep-sea marine life that would be hard to capture otherwise. “The fish can not only gather video, but potentially also other sensor data, as well as taking water samples. We are for example curious to take water samples of the habitats, measure the temperature, and also record the sounds marine animals emit.”