Rigoberto Moreno Delgado was, like many other college students, unsure which path to go down. After taking a class on computer science, he decided to pursue it as his major, and became interested in parallel computing.

“I began my journey into parallel and high-performance computing after my university advisor, Dr Ali Kooshesh, mentioned that my favourite professor, Dr Suzanne Rivoire, was teaching a course titled Parallel Computing,” Rigoberto explains to us. “I was sceptical about enrolling into the class since I had no idea what parallel computing was, but I decided to take the course and I did not regret my decision.”

He met Dr Barry Rountree, a researcher at a lab that specialises in supercomputing, while working as an intern at the Lawrence Livermore National Laboratory (LLNL) over the summer of 2016.

“I was inspired to build my own cluster so that I could gain more experience with a distributed system,” Rigoberto tells us. “I also needed a plan for my senior research project.”

A cluster of Pis

Rigoberto was able to submit this idea as his senior research project, and began researching. “The biggest challenge was to find a suitable, inexpensive computer that I could use as the nodes for my cluster. I originally thought about purchasing old desktops and chaining them up together to form a Beowulf cluster, but I wanted my cluster to be portable. The best choice was to use Raspberry Pis.”

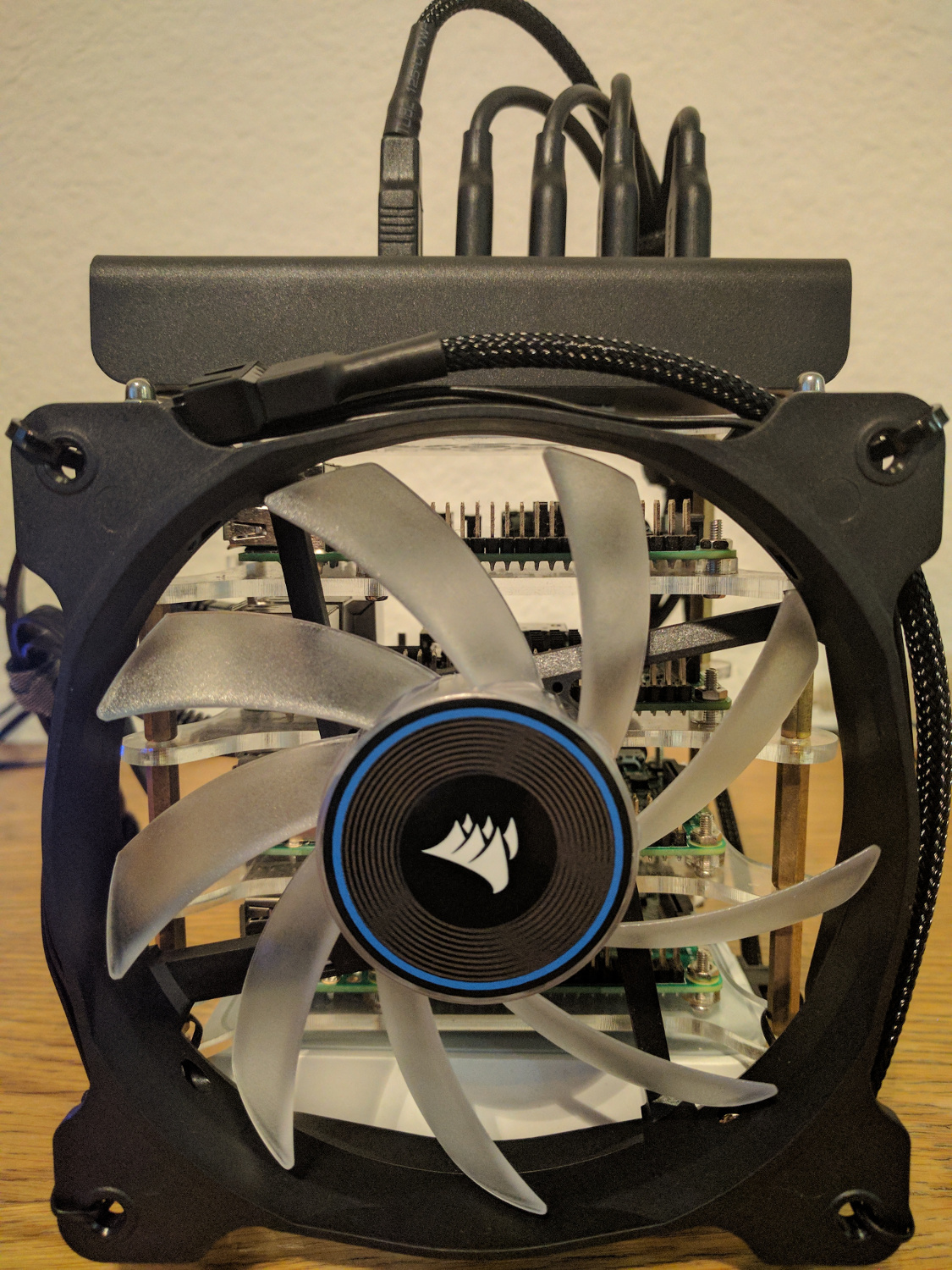

The cluster consists of a modest four Raspberry Pi 3s networked together. There’s also a cooling fan connected to the makeshift chassis for the setup, and extra heatsinks on the CPUs to help keep the system well ventilated.

“My initial thought was that the CPU on board the Raspberry Pi would be the biggest limiting factor to obtaining the best performance out of the cluster,” Rigoberto says of his experiment, “but I had high hopes for my cluster.”

Benchmarking a cluster

Rigoberto performed two major tests on his cluster, a Matrix Multiplication and an HPL (High-Performance LINPACK) benchmark.

The Matrix Multiplication benchmark involves taking two matrices of the same size and multiplying them. The benchmark works by creating two matrices of random numbers of a given size.

MPI (Message Passing Interface) is used to distribute evenly sized chunks of the matrices via Ethernet to every node/process, so that they work their chunk in parallel. Then the results are gathered for each node/process.

This benchmark benefits heavily from parallel execution, since the matrices can be broken down into smaller chunks which can then be sent via Ethernet to other nodes to be worked on in unison.

The HPL benchmark is used by the Top 500 List, which is comprised of the top 500 performing computer systems in the world. The rankings are determined by how many FLOPS (floating point operations per second) a computer can calculate.

Rigoberto was successful in earning his Computer Science degree, with this cluster as his senior project, but he’s not quite done with running benchmarks on it:

“Future work involves changing the current operating system (Raspbian Jessie Lite) to CentOS 7 and measuring performance differences, as well as optimising the kernel to better suit the cluster. I would also like to find a method to put Gigabit Ethernet on each Raspberry Pi in my cluster to measure the performance gains that a faster interconnect would present.”

Benchmark results

Matrix Multiplication tests

Run-time comparison

Test: A simple comparison of how fast the cluster runs, versus the speed of a single Raspberry Pi

Result: The Raspberry Pi cluster was slightly slower than the single Raspberry Pi

Speedup comparison

Test: A comparison of how different numbers of processes running in parallel perform

Result:The cluster is significantly worse than the Raspberry Pi on its own

Rigoberto says: “The combined resources that the cluster has make it more powerful than a single Raspberry Pi, but that does not make it faster with regard to the small-scale matrix multiplication that I used. The main reason why the cluster is not able to outperform a single Raspberry Pi is the interconnects between the nodes. Using the on-board Ethernet (10/100 Mbps) limits how quickly nodes/processes can communicate with each other.”

HPL test

Test: A pure test of computing power

Result: 3.463 GFlops

Rigoberto says: “My cluster was able to achieve a peak of 3.463 GFlops. Of course, that is nowhere close to being up to par with the systems in the Top 500 list [which can be more than a million times faster]. As you can see from the graph, most of the scores sit around 3.43 and 3.45 GFlops. The HPL benchmark greatly benefits from having fast interconnects (Ethernet in this case). Had the Raspberry Pis had faster Ethernet speeds (preferably Gigabit), the GFlops that could be obtained by the cluster would be a lot higher than they currently are.”